DevExtreme - Real World Patterns - Event Sourcing Architecture

This post is part of a series describing a demo project that employs various real-world patterns and tools to provide access to data in a MongoDB database for the DevExtreme grid widgets. You can find the introduction to and overview of the post series by following this link.

I have created another new branch for the demo that implements Event Sourcing on top of the CQRS pattern that was part of the concept from the beginning. You can access the branch by following this link.

Event Sourcing

The idea of the Event Sourcing pattern is to store actions, or events, instead of data that changes in-place over time. Imagine you didn’t have a database that contained the current state of all your business data. With Event Sourcing, you (may) only have a log of all data-relevant actions that ever occurred in your system. This log can only be appended to, and by replaying the actions in the log, you can arrive at the current state of an entity - or any other state the entity had at any point in time.
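As a minimal sketch of the replay idea (written in Python for brevity, not taken from the demo), the following folds an append-only log into the state of one entity. The `Event` fields, the event kinds `created` and `updated`, and the `replay` function are all hypothetical names chosen for illustration.

```python
from dataclasses import dataclass
from typing import Any, Optional


@dataclass(frozen=True)
class Event:
    sequence: int            # position in the append-only log
    entity_id: str
    kind: str                # "created" or "updated" (hypothetical kinds)
    data: dict[str, Any]


def replay(log: list[Event], entity_id: str,
           up_to: Optional[int] = None) -> Optional[dict[str, Any]]:
    """Fold the log into the state of one entity.

    If up_to is given, replay stops after the event with that sequence
    number, which yields the state the entity had at that point in time.
    """
    state: Optional[dict[str, Any]] = None
    for event in log:
        if up_to is not None and event.sequence > up_to:
            break
        if event.entity_id != entity_id:
            continue
        if event.kind == "created":
            state = dict(event.data)
        elif event.kind == "updated" and state is not None:
            state = {**state, **event.data}
    return state


log = [
    Event(1, "row-1", "created", {"name": "Widget", "price": 10}),
    Event(2, "row-1", "updated", {"price": 12}),
    Event(3, "row-1", "updated", {"name": "Big Widget"}),
]

current = replay(log, "row-1")            # state after all events
earlier = replay(log, "row-1", up_to=2)   # state as of event 2
```

Because the log is never modified, `earlier` can be recomputed at any time, which is what makes historical states available for free.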

Note that the terminology used by different authors to describe entities is somewhat ambiguous. Sometimes such entities may exist in memory in the shape of domain objects, and this term is often used. The term aggregate is also frequently used to capture the idea that some data structure aggregates information from events flowing through the system.

At this point of my description, entities exist only as in-memory data that may be kept by the Event Sourcing system to reflect the current state and to avoid having to regenerate entities when new events arrive. Sometimes, snapshots may be used to persist state at certain points of the event log. This can be useful to save time when the system is restarted, because then the number of events that need to be replayed to arrive at the current state is smaller.
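To illustrate why snapshots save time on restart, here is a small sketch under simplifying assumptions: events are `(sequence, changes)` tuples for a single entity, and a snapshot is a `(sequence, state)` pair. The helper names `take_snapshot` and `restore` are made up for this example.

```python
from typing import Any

State = dict[str, Any]
LogEvent = tuple[int, State]      # (sequence number, changed fields)
Snapshot = tuple[int, State]      # (sequence number covered, state)


def take_snapshot(events: list[LogEvent], up_to: int) -> Snapshot:
    """Replay events up to a sequence number and keep the result."""
    state: State = {}
    for sequence, changes in events:
        if sequence > up_to:
            break
        state = {**state, **changes}
    return up_to, state


def restore(snapshot: Snapshot, events: list[LogEvent]) -> tuple[State, int]:
    """Start from the snapshot and replay only the events after it.

    Returns the restored state and the number of events replayed.
    """
    snapshot_sequence, snapshot_state = snapshot
    state = dict(snapshot_state)
    replayed = 0
    for sequence, changes in events:
        if sequence <= snapshot_sequence:
            continue  # already reflected in the snapshot
        state = {**state, **changes}
        replayed += 1
    return state, replayed


events = [
    (1, {"name": "Widget"}),
    (2, {"price": 10}),
    (3, {"price": 12}),
    (4, {"stock": 5}),
]

snapshot = take_snapshot(events, up_to=3)
from_snapshot, replayed_with_snapshot = restore(snapshot, events)
from_scratch, replayed_without_snapshot = restore((0, {}), events)
```

Both restores arrive at the same state, but the one that starts from the snapshot replays a single event instead of four.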

The final item I’ll mention here (although I’m not making use of it in my demo) is the projection. Event Sourcing systems support projection definitions, which facilitate queries. A projection is another in-memory data structure whose shape is defined according to specific query requirements, and which is maintained continuously as events are triggered.
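A projection can be sketched as an object that receives every event and keeps a query-specific structure up to date. The example below is an illustration only: the `RowsPerCategory` class, the event shape (`id`, `data`) and the `category` field are assumptions, not part of the demo.

```python
from collections import Counter
from typing import Any


class RowsPerCategory:
    """Hypothetical projection answering 'how many rows per category?'."""

    def __init__(self) -> None:
        self.counts = Counter()       # category -> number of rows
        self._category_of = {}        # entity id -> current category

    def handle(self, event: dict[str, Any]) -> None:
        new_category = event["data"].get("category")
        if new_category is None:
            return  # this event does not affect the projection
        old_category = self._category_of.get(event["id"])
        if old_category is not None:
            self.counts[old_category] -= 1
        self.counts[new_category] += 1
        self._category_of[event["id"]] = new_category


projection = RowsPerCategory()
for event in [
    {"id": "row-1", "data": {"name": "Hammer", "category": "tools"}},
    {"id": "row-2", "data": {"name": "Saw", "category": "tools"}},
    {"id": "row-1", "data": {"category": "toys"}},   # row-1 changes category
    {"id": "row-2", "data": {"price": 15}},          # ignored by this projection
]:
    projection.handle(event)
```

The query itself then becomes a simple lookup in `projection.counts`, with no need to touch the event log.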

For more general information on Event Sourcing I recommend you read Martin Fowler’s article as well as this description on Microsoft’s website.

Using a read model

For the querying requirements of my demo application, the projection technique is not a good choice. Since queries depend on user interaction and are fully dynamic, it would be impossible to maintain projections for all query option combinations. Theoretically, a projection could be created dynamically when a query is run, but that would mean replaying the event log for each query. This would not result in satisfactory performance.

Instead, I decided to generate a persistent read model for the queryable data. This is a common approach for various scenarios. In a real-world application, you could choose which parts of your data require read models, and these models could take shapes that are specifically adapted to the requirements of the queries you expect to run against them. In my simple case, I decided to persist the read model in the same structure I was previously using for my persistent data, so that my querying logic would run against it without change.
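The following sketch shows the general shape of such a read model. It is not the demo's implementation: a plain dictionary stands in for the database collection, and the event kinds, the `_id` field and the `run_query` helper are assumptions made for illustration.

```python
from typing import Any, Callable

Row = dict[str, Any]


class ReadModel:
    """Keeps rows in the same shape the query logic already expects."""

    def __init__(self) -> None:
        self.rows: dict[str, Row] = {}   # stand-in for a database collection

    def handle(self, event: dict[str, Any]) -> None:
        if event["kind"] == "created":
            self.rows[event["id"]] = {"_id": event["id"], **event["data"]}
        elif event["kind"] == "updated" and event["id"] in self.rows:
            self.rows[event["id"]].update(event["data"])


def run_query(rows: dict[str, Row], predicate: Callable[[Row], bool]) -> list[Row]:
    """Existing query logic: it only sees rows, never events."""
    return [row for row in rows.values() if predicate(row)]


model = ReadModel()
model.handle({"kind": "created", "id": "row-1", "data": {"name": "Widget", "price": 10}})
model.handle({"kind": "created", "id": "row-2", "data": {"name": "Gadget", "price": 30}})
model.handle({"kind": "updated", "id": "row-1", "data": {"price": 25}})

expensive = run_query(model.rows, lambda row: row["price"] > 20)
```

Since the rows keep their original structure, arbitrary user-driven queries can run against the read model without replaying anything.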

Architecture

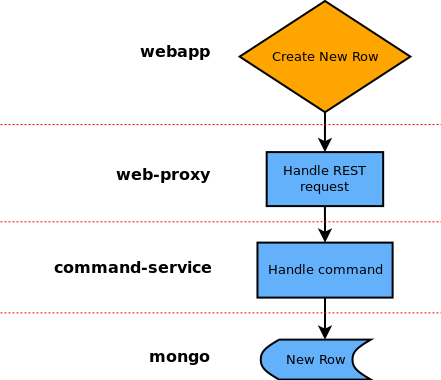

To visualize the changes in the system, I created a flowchart of the process that begins with the user creating a new row in the front-end application. Here’s the straight-forward implementation that was used in the original branch of the demo:

The arrows in the image denote messages being sent from one service to the other, and it is a linear process that leads from the front-end to the persistent storage.

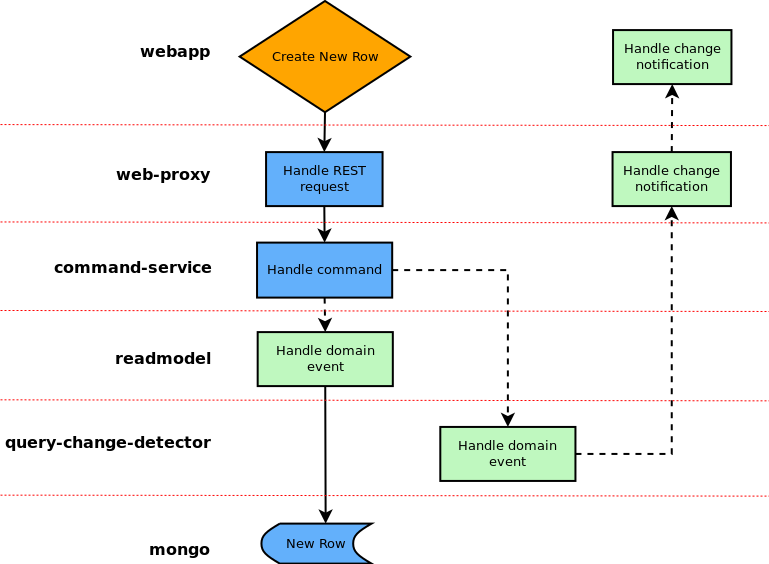

In comparison, here is the flowchart for the new implementation:

This time, the command service doesn’t contact the mongo service directly. Instead, it raises a domain event (events are denoted by the dashed arrow lines), which is handled both by the readmodel service and the query-change-detector. Through the readmodel service, the data is persisted as before (again, I decided at this point to keep the data structure the same).
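The fan-out from one domain event to two independent handlers can be sketched with a tiny in-process publish/subscribe bus. The demo uses separate services that exchange messages, so everything here (the `EventBus` class, the `entity.created` event name, the handler functions) is a simplified, hypothetical stand-in.

```python
from collections import defaultdict
from typing import Any, Callable

Handler = Callable[[dict[str, Any]], None]


class EventBus:
    """Minimal in-process stand-in for the messaging layer."""

    def __init__(self) -> None:
        self._handlers: dict[str, list[Handler]] = defaultdict(list)

    def subscribe(self, kind: str, handler: Handler) -> None:
        self._handlers[kind].append(handler)

    def publish(self, kind: str, event: dict[str, Any]) -> None:
        for handler in self._handlers.get(kind, []):
            handler(event)


read_model: dict[str, dict[str, Any]] = {}
seen_by_detector: list[str] = []


def readmodel_service(event: dict[str, Any]) -> None:
    read_model[event["id"]] = event["data"]      # persist as before


def query_change_detector(event: dict[str, Any]) -> None:
    seen_by_detector.append(event["id"])         # inspect for query impact


def command_service(bus: EventBus, entity_id: str, data: dict[str, Any]) -> None:
    # The command handler only raises the event; it does not know who listens.
    bus.publish("entity.created", {"id": entity_id, "data": data})


bus = EventBus()
bus.subscribe("entity.created", readmodel_service)
bus.subscribe("entity.created", query_change_detector)
command_service(bus, "row-1", {"name": "Widget"})
```

The point of the design is the decoupling: a further consumer can be added with another `subscribe` call, without touching the command handling.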

On the basis of the domain events raised in response to commands, there is now an easy way of tracking changes in the system. I decided to utilize this to provide query change tracking for the front-end application. The query-change-detector monitors the domain events and checks whether they influence a query that has been registered with the service (registration happens at an earlier stage, when the query is first run). If an event does influence a registered query, the service sends a message to the web-proxy (logically an event message, and denoted as such in the diagram), which forwards the information to the front-end.
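As a rough sketch of this mechanism, the detector below keeps a registry of queries and calls a notification callback whenever an incoming event matches one of them. How the demo actually decides that an event influences a query is not described here, so the predicate-based matching, the class name and the callback (a stand-in for the message to the web-proxy) are assumptions.

```python
from typing import Any, Callable

Matcher = Callable[[dict[str, Any]], bool]
Notify = Callable[[str, dict[str, Any]], None]


class QueryChangeDetector:
    def __init__(self, notify: Notify) -> None:
        self._notify = notify                    # stand-in for the web-proxy message
        self._queries: dict[str, Matcher] = {}

    def register(self, query_id: str, matches: Matcher) -> None:
        """Called when a query is first run."""
        self._queries[query_id] = matches

    def handle(self, event: dict[str, Any]) -> None:
        """Called for every domain event."""
        for query_id, matches in self._queries.items():
            if matches(event["data"]):
                self._notify(query_id, event)


notifications: list[tuple[str, str]] = []
detector = QueryChangeDetector(
    lambda query_id, event: notifications.append((query_id, event["id"]))
)
detector.register("cheap-items", lambda row: row.get("price", 0) < 20)
detector.register("tools", lambda row: row.get("category") == "tools")

detector.handle({"id": "row-1", "data": {"category": "toys", "price": 5}})
detector.handle({"id": "row-2", "data": {"category": "tools", "price": 50}})
```

This simplification only catches rows that match a query after the change. A row that stops matching also changes the query result, so a fuller version would have to compare against the previous state as well.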

Note that both diagrams skip a step made by the web-proxy, where the new data is sent to the validation service before anything else happens. This step is not relevant to the discussion in this post.

Implementation

For more details about the implementation of the architecture I described above, please see this follow-up post.